Your Tribe, Electrified

Every group chat is its own economy. We are only beginning to understand what that means.

I.

We have always organized ourselves in small groups before we organized ourselves into anything larger. The tribe preceded the city-state; the guild preceded the corporation; the congregation preceded the denomination. What looks, from a distance, like civilization is, up close, an accretion of intimate circles, each containing its own norms, its own memory, and its own implicit economy of trust and obligation. And when those circles sought to grow—to extend their reach across time, space, and strangers—we built institutions to carry the load: laws to encode the norms, currencies to transfer the obligation, contracts to make the trust legible to people who had never met.

The question that every new organizational technology reopens is not whether humans will form these circles—they always do—but what shape the circles will take, and what they will be able to do together.

The group chat is now one answer to that question. While its form factor has been around for a while, most people haven’t registered that it is becoming something considerably more consequential: a unit of economic organization. Not a metaphor for one. An actual one.

When a group of people can share a wallet, delegate to shared agents, hold assets collectively, and govern their own rules of interaction—all within the same interface where they talk—the group chat stops being a messaging app and starts being an institution. A small one. A personal one. But an institution nonetheless, with the same essential properties that have always made institutions matter: the capacity to coordinate action, pool resources, and potentially extend trust beyond what any individual could sustain alone.

II.

To understand why this matters, it helps to understand what group chats have had to give up to exist. Every major messaging platform of the last two decades has been built on a bargain that users accepted without quite realizing it: the platform provides the infrastructure of connection, and in exchange, the group surrenders its autonomy. The platform sets the rules. The platform holds the data. The platform decides what is permissible. The tribe is electrified, but the electricity runs through someone else’s grid.

The consequences run deeper than the standard privacy critique. It is not only that messages can be read, or that metadata is harvested. It is that the group, as an entity, has no standing—no capacity to own, to commit, to act collectively in any domain beyond conversation. The infrastructure of connection is present; the infrastructure of coordination is absent. The group can talk. It cannot do.

The identity architecture underlying these platforms reinforces this dependency. Digital identity, as we have built it over two decades, is fundamentally a system of permanent attribution on static data—phone numbers tied to SIM cards tied to government IDs, email verification loops, biometric enrollments. Each innovation promised the certainty of in-person recognition extended to digital space. Each also created a permanent record of association: who you talked to, when, and what you said. The group chat became a legible artifact, one that could be leaked, subpoenaed, screenshotted, forwarded. When Pete Hegseth’s Signal messages became public, the damage was not that the encryption had failed—it hadn’t. The damage was that the attribution was perfect. Everyone knew exactly who had said what.

What we conflated, in building this architecture, were two distinct problems. The first is proving that someone is human—or more precisely, that they are who they claim to be. The second is tracking everything they subsequently say. These are not the same problem, and solving the first does not require doing the second. We have simply built systems that do both simultaneously, because that was easier, or because the parties with the most to gain from permanent attribution—platforms, advertisers, governments—often had more influence over the design than the users who pay the costs.

III.

The only undefeated method of verifying that someone is who they claim to be remains meeting them. Not their email address, not their iris scan—them. In physical space, making eye contact, hearing their voice. This is not nostalgia. It’s a recognition that millennia of human social architecture were built on human connection, and that the elaborate substitutes we have constructed carry costs that are still being reckoned.

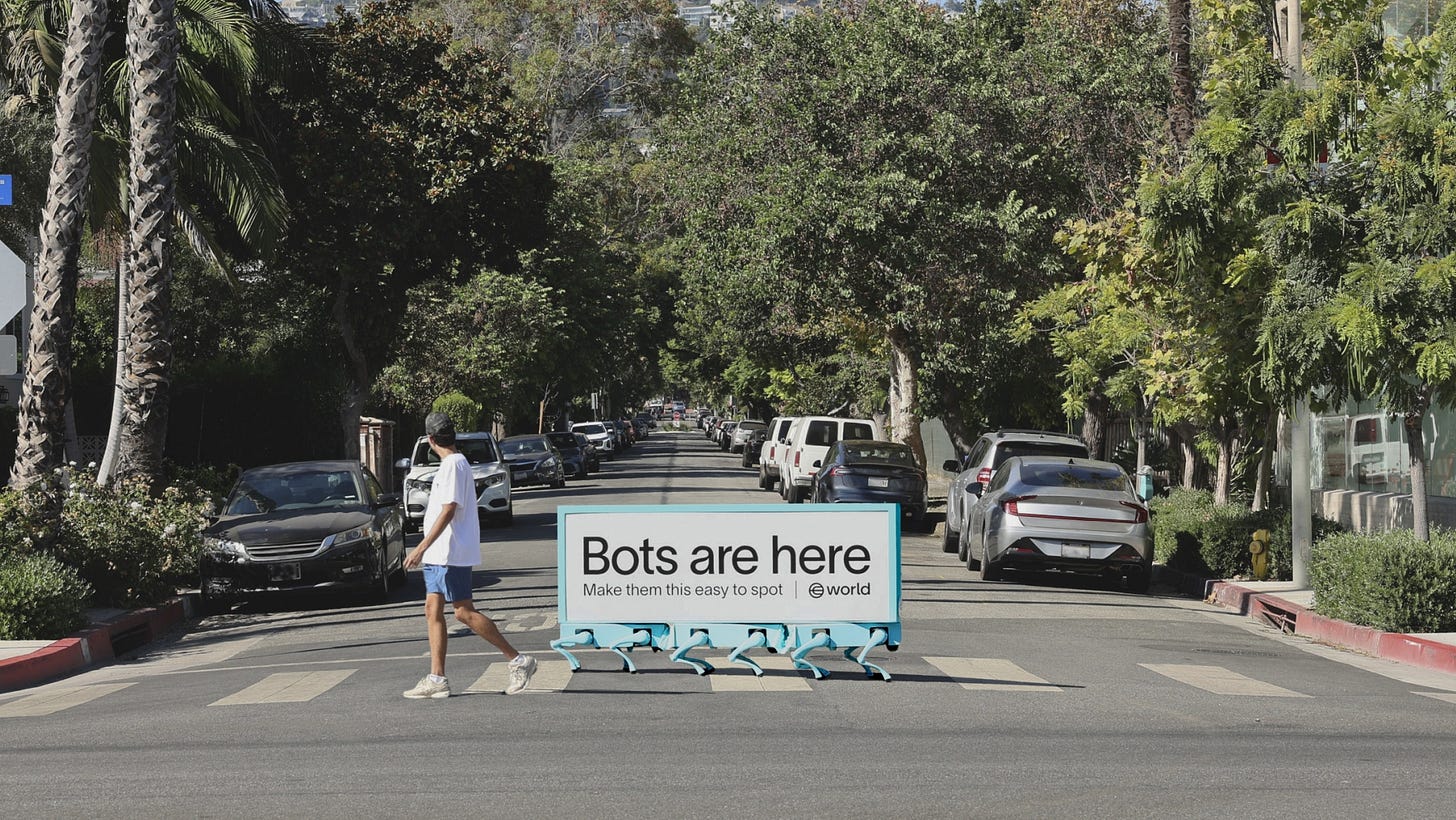

The World app’s recent billboard—a robot walking through cities carrying the message “Bots are here. Make them this easy to spot”—gestures toward a real problem. We do need to distinguish between humans and bots, and the need becomes even more acute, not less, as AI agents proliferate. An agent booking a flight on your behalf, a home-rolled bot built with OpenClaw and posting under your account, an AI system authorized to execute transactions in your name: each of these requires a clear answer to the question of who, ultimately, is responsible. The demand for robust and persistent verification is legitimate. World ID, Self Protocol, and their counterparts are responding to something genuine with interesting technical solutions.

But here is what the billboard logic misses: stronger verification does not reduce the need for ephemerality. Oddly, it may enable it. The more reliably we can establish who is present and who has authorized what, the more safely we can allow what follows to dissolve. Verification and disappearance do not have to be in tension. They can be symbiotic. You can build a system where conversations go poof if you are confident about who was in the room. The Chatham House Rule isn’t meant for a room full of strangers; it works precisely because everyone present is accountable as a member or has bought into the gathering. Off the record doesn’t need to mean random or spammy.

What would it look like to verify at the moment of meeting or invitation—strongly, using the oldest human method or its cryptographic equivalent—and then allow everything that follows to be optionally attributed? Harvard’s Berkman Klein Center developed Nymspace some years ago on exactly this logic, for institutional settings where it serves obvious needs: classrooms, committees, workshops. Each participant receives a unique pseudonymous identifier that persists within a discussion thread. You know you are in conversation with your colleagues—people whose presence you can verify—but you cannot tell which specific person wrote which comment. The group is known; the attribution is variable.

The feedback from Nymspace users is instructive. “Nothing about you but your ideas matter,” one wrote. Another: “there is greater distribution of contribution and sense of freedom in discussion when typed anonymously online within a known context of people.” That phrase—“a known context of people”—is the operative one. This is not the anonymity of a comment section. It is the anonymity with consent of a Chatham House session: everyone in the room is accountable as a member of the group; what floats free is the attribution of individual statements. The medium has changed what people are willing to say—or more precisely, it has restored a kind of conversation that used to happen around tables and has been progressively supplanted by the logic of the permanent record.

IV.

Every group chat is its own economy. This is the claim that, once you see it, is difficult to unsee.

The word “economy” comes from the Greek “oikonomia,” meaning the management of a household. The household was, for most of human history, the primary unit of economic life: the place where production and consumption, labor and resource allocation, trust and obligation, were organized. The modern economy abstracted these functions upward, into firms and markets and states, in ways that enormously expanded their scale and efficiency, and that also severed a lot of the connection between economic activity and human relationship. You do not need to know or trust the person on the other side of an arm’s-length transaction. The institution—the contract, the currency, the court—does the work that personal connection used to do.

What is becoming possible is not a return to the household economy—it is more: the technical realization of what the household economy always was, extended to groups constituted by choice rather than birth, operating at digital speed, and equipped with instruments of coordination that no household in history has possessed.

A group chat that can hold a shared wallet is a group that can pool resources without a bank account, without a corporate entity, without a legal jurisdiction. A group chat that can deploy shared agents—AI systems acting on the group’s behalf, authorized by the group’s members, operating within parameters the group has set—is a group that can act in the world without requiring any individual member to act alone. A group chat with its own identity infrastructure—verified membership, optional attribution, portable credentials—is a group that can present itself to other groups and to the wider world on its own terms, rather than as an artifact of some platform’s data model.

Consider the range of what this makes conceivable. A racket club booking courts, splitting tournament fees and organizing travel without a treasurer or a Venmo chain. A family managing shared obligations—care for an aging parent, a collectively owned property, the extracurriculars of children—with instruments that reflect the actual complexity of family economics rather than flattening it into individual accounts. A professional cohort sharing deal flow, due diligence, and capital allocation within a trusted circle, without the legal overhead of a fund. A neighborhood group collectively negotiating with vendors, coordinating mutual aid, holding a shared reserve. A diaspora community maintaining economic connection across borders without the friction of correspondent banking.

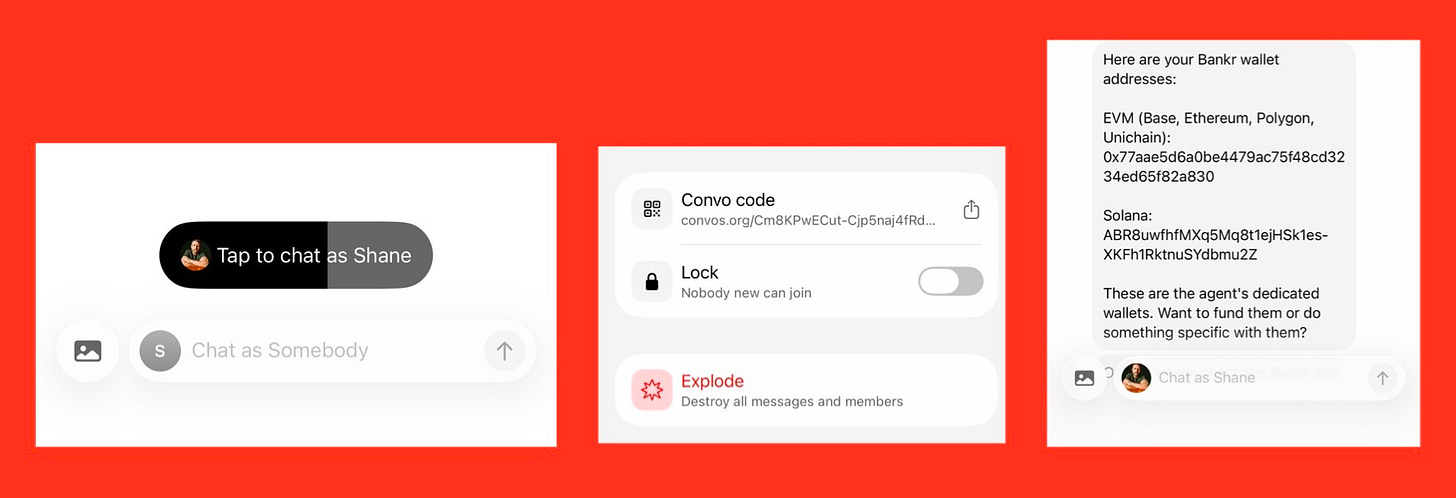

These are not exotic use cases. They are what people have always wanted to do with their trusted circles. What has been missing is the infrastructure to do it at the speed and scale of digital life, without surrendering control to an intermediary. Convos, built on the XMTP open messaging protocol, is an early example of what that infrastructure looks like in practice: group chats that begin with in-person verification or a shared invite link, support optional attribution in incognito mode, and are beginning to incorporate shared wallets and agent delegation. The conversations can disappear; the economic capability persists. When I finished my first conversation on it, the founder blew up the thread. The content was gone. The trust that had been established—the fact of the meeting, the tone, the relationship—was not. That asymmetry, trivial-seeming in a single exchange, is the architectural premise of a different kind of group.

V.

The agent question is where this becomes both more powerful and more vertiginous. We are entering a period in which AI agents will operate with increasing autonomy on behalf of individuals and groups—booking travel, executing transactions, managing communications, making decisions within delegated parameters. The question of how these agents are authorized, and how their actions are attributed, is not primarily a technical question. It is an institutional one.

The spectrum that results is richer than the binary of human versus bot that most current discourse assumes. There are first-person actions, where you are genuinely present; second-person, where an agent acts under your explicit authorization; and third-person, where an agent acts autonomously with no live human in the loop. The grammar of that distinction, it turns out, has real institutional consequences. Each of these requires a different attribution treatment. The delegated agent needs a clear chain of authorization. The zero-knowledge agent—one that appears, executes its task and then disappears, leaving no record except the outcome—needs precisely the kind of verified-group context that makes its disappearance safe rather than suspicious.

A group that can govern its own agents has capabilities that no previous informal institution has possessed. The agent is an extension of the group’s will into domains where the group cannot be physically present. It can act continuously, at scale, with precision. It can hold credentials the group has delegated and relinquish them when the delegation expires. And because the group’s membership is verified—because everyone in the circle has consented to be there, even if not every statement is attributed—the group can authorize its agents with genuine accountability. Poof, again, leans on provenance.

It is worth being precise about what kind of institution this is, and what it is not. Ronald Coase’s insight, in his 1937 essay on the nature of the firm, was that firms exist because organizing internally is sometimes cheaper than transacting through markets. The boundary of the firm sits wherever that calculus tips. Enterprise software—the stack that runs from Salesforce to Slack to Box—has spent three decades optimizing coordination within firms whose boundaries were already fixed: the legal entity, the org chart, the credentialed employee. Aaron Levie and his peers at these SaaS players are now racing to capture the AI agent-enhanced enterprise context, and they will build impressive and valuable things within that frame. The enterprise group chat, enriched with agents and shared context, is a real and important market.

But the Coasian question that enterprise software cannot answer is the one about formation costs. Coase assumed the firm already existed; he was explaining its internal logic. While there’s increasingly chatter about “zero-employee” or “zero-human” companies, there’s less acknowledgement of the similarly likely small and mighty group empowered with agents. What happens when the transaction costs of forming a firm in the first place—the legal fees, the governance overhead, the credentialing, the compliance burden—approach zero? When a trusted circle of people can spin up the functional equivalent of a small firm inside a group chat, act together for a season or a purpose, and dissolve without leaving a legal residue? That is not a smaller enterprise. It is a different organizational form: one that maps onto the actual texture of how people already trust each other, rather than onto the abstractions that formal institutions require. The enterprise context is valuable territory. The personal and tribal context is different territory—and in some ways more fundamental, because it is where trust originates before institutions arrive to codify it.

Private group context, in this light, is not just valuable to the group’s members. It is the most valuable context in the world for the agents that serve them. An agent that understands the history, norms, relationships, and objectives of a particular group can act with a specificity and appropriateness that no general-purpose system can match. The group’s context is the agent’s most relevant training data, continuously updated, perpetually relevant. The group is not just an economic unit. It is an epistemic one.

VI.

In 1964, the media theorist Marshall McLuhan argued that the electric light was the purest example of a medium without a message: a technology with no content except the transformation it worked on human behavior. The light itself did not matter. What mattered was that night became day, that human activity was no longer bounded by the sun, that new patterns of life emerged from the sheer extension of luminosity. The medium was the message because the medium reorganized everything else.

The group chat as economic unit is beginning to look like this kind of medium. Its content—the conversations that pass through it—matters less than what it reorganizes. When trust can be verified at the moment of connection and then allowed to operate without permanent attribution, the group’s internal life changes. When the group can hold assets and deploy agents, its relationship to the external world changes. When these capabilities compound, the group becomes something qualitatively different from what it was: a consented tribe, electrified.

I gave a TED talk some years ago on blockchain as a technological institution—a way of extending the reach of transactions beyond what prior institutions could manage, into a space where humans and machines might share the same infrastructure. The argument was about scale: how do you take the trust that works in small groups and extend it to strangers, to machines, to a global economy? Blockchain was one answer: a shared programmable state machine that substitutes for shared relationship. I imagined the future as a world filled with Distributed Autonomous Institutions and encoded governance.

What I did not fully anticipate was the possibility of going in the other direction: not scaling the trust of small groups outward into abstraction through code, but making the small group itself more capable. Not replacing tribal economy with institutional economy, but equipping the tribe with institutional tools. The campfire turned into a light bulb—not because it illuminates more territory, but because it gives the people around it new powers.

The question of what those powers will produce is genuinely open. Groups with wallets, agents, and optional attribution are groups that can experiment with forms of collective action that have never previously been practical. Some of those experiments will be mundane: splitting a dinner bill with less friction; shooting the shit more privately or candidly online. Some will be significant: enabling economic coordination for communities that have been systematically excluded from formal financial infrastructure. Some will be ungovernable: creating new modalities of collective behavior whose consequences no one has thought through.

The tribe, when electrified, does not only warm the people within it. It changes what is possible in the dark.

NB: I haven’t figured out the best way to accurately attribute when I leverage AI tools for a mixture of debating or collaborating on my ideas, especially when revisiting old drafts or versions across longer time lapses. For now, I will just say that various AI tools were used for re-drafting and editing portions of this essay, including Claude Sonnet 4.6 by Anthropic (2025), though I retain control over the final content and any mistakes. Taking suggestions for better attribution methods in phased work if you have any.